GSA SER Verified Lists Vs Scraping

The Core Difference Between GSA SER Verified Lists and Scraping

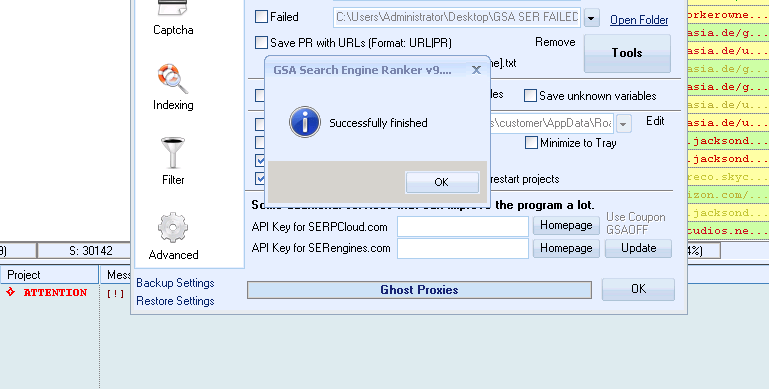

When building links with GSA Search Engine Ranker, the data source you feed into the tool defines your entire campaign. Two camps have formed around this need: those who rely on purchasing or compiling GSA SER verified lists, and those who scrape targets fresh from the web in real time. It pays to understand the practical difference before you spend time or money on the wrong approach.

What Are GSA SER Verified Lists?

A verified list is a pre-built collection of URLs, typically thousands or millions, where each entry has already been tested and found to accept registrations, submissions, or content posts. These lists are categorized by platform type: article directories, web 2.0 properties, wikis, forum profiles, trackbacks, and more. The key promise is that the targets are ready to use because someone has already run the platform identification and registration tests.

The Real-Time Scraping Alternative

Scraping bypasses static lists entirely. You configure GSA SER's built-in search engine scraper with footprints and keywords, and the tool harvests targets from Google, Bing, and other engines on the fly. Each URL reflects what the engines return at that moment for your specific niche keywords and platform footprints, so the target pool is rebuilt continuously rather than fixed in advance.

Speed and Efficiency: Stored Data vs Live Discovery

When comparing GSA SER verified lists vs scraping on speed, the winner isn't always clear-cut. A verified list allows instant processing because the tool doesn't need to query search engines or wait for page loads to identify a target type. You point the campaign at the list file, and submission attempts start immediately. However, a sizable share of entries in any static list will be dead: domains expire, platforms update their software, or registration pages get blocked. That means your submission loop wastes threads on failed connections and 404 errors.

Scraping, on the other hand, has an upfront time cost. Each search query consumes proxy bandwidth and introduces latency. But every harvested target that passes the platform identification stage was live when it was checked, so far less runtime goes to dead domains. As a result, the ratio of successful submissions to total runtime often tilts in favor of scraping for long-running, maintained campaigns.
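The speed trade-off described above can be put into rough numbers. The sketch below is a back-of-the-envelope model, not anything GSA SER exposes: the `SourceProfile` class, its field names, the timing values, and the success rates are all hypothetical placeholders you would replace with figures from your own campaign logs.

```python
from dataclasses import dataclass


@dataclass
class SourceProfile:
    """Hypothetical per-target timing profile for one target source."""
    name: str
    dead_fraction: float      # share of targets that fail outright (expired, blocked, 404)
    secs_per_dead: float      # thread time lost on a dead target (timeouts, retries)
    secs_per_live: float      # thread time spent submitting to a live target
    secs_discovery: float     # per-target search and identification overhead (0 for a list)
    live_success_rate: float  # share of live targets that accept the submission


def successes_per_hour(p: SourceProfile, threads: int) -> float:
    """Expected successful submissions per hour across all threads."""
    # Average wall-clock seconds a single thread spends on one target.
    avg_secs = (
        p.secs_discovery
        + p.dead_fraction * p.secs_per_dead
        + (1 - p.dead_fraction) * p.secs_per_live
    )
    successes_per_target = (1 - p.dead_fraction) * p.live_success_rate
    return threads * 3600 / avg_secs * successes_per_target


# Illustrative numbers only: an aging list with half its entries dead, versus
# scraped targets that cost search time but have already passed identification.
old_list = SourceProfile("verified list", 0.5, 20.0, 10.0, 0.0, 0.3)
scraped = SourceProfile("live scraping", 0.05, 20.0, 10.0, 6.0, 0.3)

for profile in (old_list, scraped):
    print(profile.name, round(successes_per_hour(profile, threads=100)))
```

With these inputs the scraped source comes out ahead (about 3,600 vs 6,200 successes per hour), but set the list's `dead_fraction` to zero and the list wins easily, which is exactly why the speed comparison depends on how stale the list is.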

Proxy and Resource Consumption

Scraping demands high-quality proxies that aren’t banned by the search engines. You’ll burn through bandwidth rapidly, and if your proxies are slow, campaigns stall. Verified lists appear cheap on proxy usage because the tool skips the harvesting phase entirely. But if you send submissions to 100,000 targets from a list and half are dead, you’re still wearing out proxies on failed post attempts. There is no true proxy-free method; the consumption simply shifts from search scrapes to failed submission retries.
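To see why neither source is proxy-free, you can count proxy requests per successful link. This is a hypothetical model: the function name, the retry count, the requests-per-submission figure, and the number of search queries are illustrative assumptions, not GSA SER settings.

```python
def proxy_requests_per_success(
    targets: int,
    dead_fraction: float,
    retries_per_dead: int,
    requests_per_live: int,
    live_success_rate: float,
    search_queries: int = 0,
) -> float:
    """Hypothetical model: total proxy requests divided by successful submissions."""
    dead = targets * dead_fraction
    live = targets - dead
    # Search queries (scraping only) + retries burned on dead targets + real submission traffic.
    total_requests = search_queries + dead * retries_per_dead + live * requests_per_live
    successes = live * live_success_rate
    if successes == 0:
        raise ValueError("model yields no successful submissions")
    return total_requests / successes


# 100,000 list entries with half dead: no search traffic, but many wasted retries.
from_list = proxy_requests_per_success(100_000, 0.5, 3, 4, 0.3)
# 100,000 scraped targets gathered with an assumed 20,000 search queries.
from_scrape = proxy_requests_per_success(100_000, 0.05, 3, 4, 0.3, search_queries=20_000)

print(round(from_list, 1), round(from_scrape, 1))
```

The list run issues zero search queries yet still spends proxy requests on every dead entry, so its cost per successful link is far from zero; the consumption moves rather than disappears.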

Link Quality and Footprint Risks

One of the most dangerous aspects of using shared verified lists is the footprint problem. A list that gets sold to hundreds of users generates identical link profiles across countless domains. When search engines crawl a cluster of sites all linking out from the exact same set of auto-approved platforms with predictable anchor patterns, the network becomes trivial to devalue. Scraping with niche-specific footprints yields a far more varied backlink profile because your harvested targets are a snapshot of what existed for your specific keywords at that particular hour.
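One way to quantify the footprint problem is Jaccard similarity between two campaigns' target pools: shared targets divided by all distinct targets. The sketch below is illustrative only; the domains are placeholders on the reserved `.example` TLD and the pool sizes are made up.

```python
def jaccard(a: set[str], b: set[str]) -> float:
    """Shared targets divided by all distinct targets (0 = disjoint, 1 = identical)."""
    union = a | b
    if not union:
        return 0.0  # two empty pools: treat as no meaningful overlap
    return len(a & b) / len(union)


# Two buyers of the same list end up with the same pool.
bought_list = {f"target{i}.example" for i in range(1000)}
buyer_a, buyer_b = set(bought_list), set(bought_list)

# Two scrapers in different niches: a small shared core of big generic platforms,
# plus mostly niche-specific harvests (hypothetical sizes).
shared_core = {f"target{i}.example" for i in range(50)}
scraper_pets = shared_core | {f"pets{i}.example" for i in range(400)}
scraper_build = shared_core | {f"build{i}.example" for i in range(400)}

print(jaccard(buyer_a, buyer_b))                       # 1.0
print(round(jaccard(scraper_pets, scraper_build), 3))  # 0.059
```

Every buyer of a shared list sits at the maximum overlap of 1.0 with every other buyer, while niche-specific scraping leaves only the generic core in common.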

Platform Diversity and Niche Relevance

The GSA SER verified lists vs scraping debate becomes sharper when you consider niche alignment. A generic verified list holds everything: a random mix of gaming forums, pet blogs, construction directories, and language-specific platforms that have no topical connection to your money site. Scraping with your own keywords produces targets that at least carry some contextual relevance, because you're pulling URLs related to your niche terms. The actual page may still reject your submission, but the starting pool is contextually filtered. For tier-2 and tier-3 link building, this distinction matters less, but for any tier that touches your site directly, relevance can't be ignored.

Maintenance and Freshness Over Time

Verified lists decay rapidly. A list from six months ago will have a significant dead rate, and keeping a list valuable means continuously re-verifying and pruning it, which is a separate maintenance burden. Scraping introduces fresh targets every day without manual curation, and the target pool adapts as search results change. However, scraping depends on the search engines not blocking your queries aggressively, and avoiding those blocks has grown harder as engines lean more on CAPTCHAs and IP bans. A hybrid approach has emerged among experienced users: maintain a core of hand-verified, high-success-rate targets while letting scraping fill the gap with fresh discovery.
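A simple way to reason about list decay is to assume a constant monthly attrition rate r, so the surviving share after m months is (1 - r)^m. The 10% monthly loss used below is an assumption for illustration, not a measured figure; real attrition varies by platform type, and the function names are hypothetical.

```python
import math


def alive_fraction(monthly_loss: float, months: int) -> float:
    """Share of list entries still live after `months`, assuming constant monthly attrition."""
    return (1.0 - monthly_loss) ** months


def months_until_below(threshold: float, monthly_loss: float) -> int:
    """First whole month in which the live share drops below `threshold`."""
    # Smallest integer m with (1 - r) ** m < threshold, i.e. m > log(threshold) / log(1 - r).
    return math.floor(math.log(threshold) / math.log(1.0 - monthly_loss)) + 1


ASSUMED_MONTHLY_LOSS = 0.10  # hypothetical attrition rate
list_size = 100_000

six_month_alive = alive_fraction(ASSUMED_MONTHLY_LOSS, 6)
print(f"dead after 6 months: {1 - six_month_alive:.1%}")                      # 46.9%
print(f"entries left after pruning: {round(list_size * six_month_alive)}")    # 53144
print(f"live share below half in month {months_until_below(0.5, ASSUMED_MONTHLY_LOSS)}")  # 7
```

Regular re-verification keeps the live share near 100%, but only by shrinking the list, which is why a pruned list has to be topped up from somewhere.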

The Cost Equation

Buying a verified list is a one-time or subscription cost and appears cheaper than the ongoing proxy and CAPTCHA-solving fees that scraping requires. Over months, however, scraping keeps supplying live, unique targets, whereas a static list yields diminishing returns as it decays. For low-volume testing or quick bursts, verified lists provide instant value. For consistent link velocity and diversity, scraping usually wins.
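Because the cost argument hinges on your own inputs, it helps to project both options month by month. Every parameter below (list price, running costs, link yields, decay rate) is a hypothetical placeholder, and the model simplifies by treating the list's monthly yield as shrinking at a constant rate and scraping's yield as flat.

```python
from dataclasses import dataclass


@dataclass
class MonthRow:
    month: int
    list_new_links: float
    scrape_new_links: float
    list_total_links: float
    scrape_total_links: float
    list_spend: float
    scrape_spend: float


def project(
    months: int,
    list_price: float,             # one-time purchase price (hypothetical)
    list_running_cost: float,      # monthly proxies/CAPTCHAs still needed to post from the list
    list_first_month_links: float,
    list_monthly_loss: float,      # assumed constant decline in the list's monthly yield
    scrape_monthly_cost: float,    # monthly proxy + CAPTCHA spend for scraping
    scrape_links_per_month: float,
) -> list[MonthRow]:
    rows: list[MonthRow] = []
    list_total = scrape_total = 0.0
    list_spend, scrape_spend = list_price, 0.0
    for m in range(1, months + 1):
        list_new = list_first_month_links * (1 - list_monthly_loss) ** (m - 1)
        list_total += list_new
        scrape_total += scrape_links_per_month
        list_spend += list_running_cost
        scrape_spend += scrape_monthly_cost
        rows.append(MonthRow(m, list_new, scrape_links_per_month,
                             list_total, scrape_total, list_spend, scrape_spend))
    return rows


rows = project(6, list_price=100, list_running_cost=20, list_first_month_links=3000,
               list_monthly_loss=0.35, scrape_monthly_cost=80, scrape_links_per_month=2500)
for r in rows:
    print(f"month {r.month}: list {r.list_total_links:,.0f} links / ${r.list_spend:,.0f}, "
          f"scraping {r.scrape_total_links:,.0f} links / ${r.scrape_spend:,.0f}")
```

Under these assumptions the list's cumulative links flatten out while scraping keeps a steady monthly velocity; whether that is worth scraping's higher spend depends entirely on the numbers you plug in.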

When to Use Each Method

Use verified lists if you are brand new, need to see immediate output, and want to understand GSA SER's submission flow without the complexity of proxy rotation and footprint configuration. For short-term projects or churn-and-burn tiers, lists can be adequate. Switch to scraping once you have a stable proxy infrastructure and a reliable CAPTCHA-solving setup, and need to build links that don't leave an obvious, mass-produced footprint. Most serious users end up running both in parallel, feeding the tool static verified targets for high-availability platforms while scraping fresh, niche-relevant URLs to broaden the profile.

Ultimately, the choice between GSA SER verified lists vs scraping is not a binary one. It’s about resource allocation, risk tolerance, and how much of your link graph you’re willing to have overlap with every other person who bought the same list.